Why Bigger Isn’t Always Smarter

Can Small Language Models Outperform the Giants?

Like many organizations, we began our AI journey by experimenting with internal developments. We built prototypes, tested various use cases, and quickly recognized the value AI can bring to software delivery, analysis, and decision-making.

The next logical step was to scale adoption, so we provided colleagues with access to a leading large language model. The uptake was immediate. Productivity increased, ideas flowed faster, and teams began embedding AI into their everyday workflows.

Then came a reality check

By the middle of the month, our usage quota was already exhausted.

This highlighted an important insight:

AI usage doesn’t grow linearly; once it takes hold, it accelerates.

As more employees adopt it and more use cases emerge, costs can quickly spiral. The key question becomes: how do we scale AI in a sustainable way?

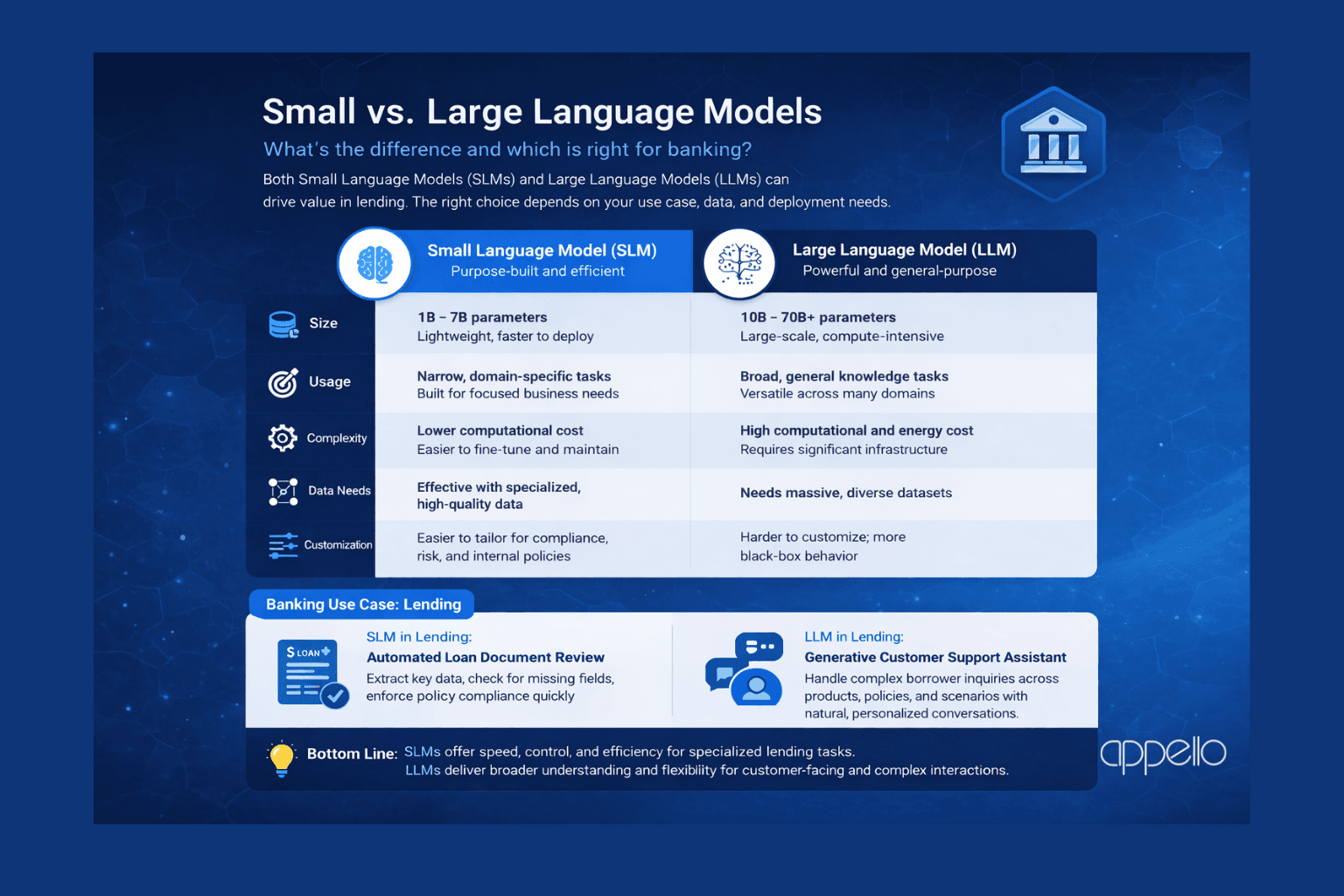

One promising answer lies in the rise of Small Language Models (SLMs). These are language models with far fewer parameters than the largest LLMs, typically specialized for well-defined tasks and able to run efficiently even in resource-constrained environments.

Compared with large, general-purpose models, SLMs are:

More cost-efficient and faster to deploy

Easier to fine-tune for specific business domains

Suitable for on-premises or private cloud environments

For many enterprise use cases, they are not just “good enough”; they are often better aligned with business needs. They also help avoid vendor lock-in, giving organizations greater flexibility and control.

We are already seeing strong adoption patterns across industries.

Software providers use SLMs for:

Code generation in controlled environments

Internal documentation search and summarization

DevOps automation and ticket triaging

Banks and financial institutions apply them for:

Credit analysis support

Document processing (loan files, contracts)

Customer communication assistance

Regulatory and compliance checks

These are high-volume, repeatable, domain-specific tasks — exactly where SLMs excel.
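One way to act on this is to send well-bounded tasks to a small model and reserve the large model for everything else. The sketch below is only an illustration of that idea: the model names, the task labels, and the `choose_model` helper are hypothetical placeholders, and it assumes each request arrives already labeled with its task type.

```python
from dataclasses import dataclass

# Hypothetical model tiers; placeholder names, not real products.
SMALL_MODEL = "small-domain-model"
LARGE_MODEL = "large-general-model"

# Illustrative labels for the high-volume, repeatable,
# domain-specific tasks listed above (assumed naming).
SLM_TASKS = {
    "ticket_triage",
    "doc_summarization",
    "document_processing",
    "compliance_check",
}


@dataclass
class Request:
    task: str  # assumed to be labeled upstream by the calling application
    text: str


def choose_model(request: Request) -> str:
    """Route known, well-bounded tasks to the small model, all others to the large one."""
    if request.task in SLM_TASKS:
        return SMALL_MODEL
    return LARGE_MODEL


if __name__ == "__main__":
    print(choose_model(Request("ticket_triage", "Printer on floor 3 is offline")))
    print(choose_model(Request("open_ended_research", "Compare market entry options")))
```

In practice the routing rule could also take cost, latency, or data sensitivity into account; a fixed task list is simply the easiest starting point.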

The future of enterprise AI is not about using the biggest model everywhere, but about choosing the right model for the right job. Balancing performance, cost, and control will define the next phase of AI adoption across banking and software.

In that future, Small Language Models will play a key role.